2024

Structures of Being – Sofia Crespo at Casa Batlló, Barcelona

Robert composed an algorithmic piece as part of Sofia Crespo’s projection mapping on Antoni Gaudí’s Casa Batlló in Barcelona. The piece was performed by Cosmos Quartet and Juan De La Rubia. It was recorded at the Palau de la Música, Barcelona.

Present Shock, with Robert Del Naja and UVA, at 180 Strand, London

Robert collaborated with Robert Del Naja of Massive Attack and Matt Clark of UVA on procedural sound design and production for this exhibit, part of Synchronicity at 180 Strand, London.

2022-23

Goalkeepers Event 2022

Robert composed an ambient music soundscape for this event in New York, held by the Gates Foundation, which encourages projects that make progress on the UN Global Goals. The piece featured excerpts from previous speakers at the event, including Barack Obama, Jacinda Ardern, Bill Gates and Stephen Fry.

He also used machine learning software to extract stems for a custom remix of a Kate Bush song, and cast and curated voice actors to perform a stirring speech about gender equality, which was set to dance at the event.

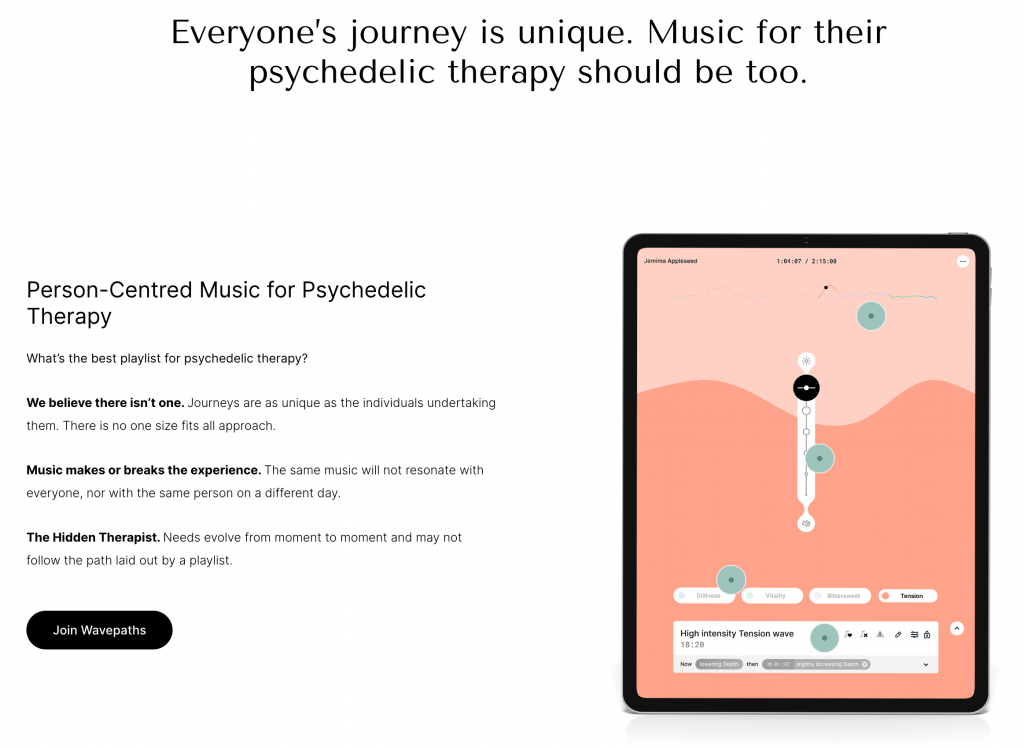

Music Lead and Principal Composer at Wavepaths

In 2022 Robert became Music Lead and principal composer for Wavepaths, managing the team of over 20 artists and composers as well as composing a lot of music for the platform, often in collaborations and remixes with wonderful artists like Jon Hopkins, NYX, Julia Gjertsen, Spencer Doran and Andrea Belfi.

Company : Wavepaths

Lead Creative : Mendel Kaelen

Role : Principal Composer, Music Lead, Generative Music System Designer and Developer

2021

Aviary by Sammy Lee at Tate St Ives – Infinite generative data driven composition 360 live stream

Company : Tate St Ives, Sammy Lee Studio

Lead Creative : Sammy Lee

Role : Co-composer with Ebe Oke

COP26 Opening Ceremony – Voices for Change Film

Company : Project Everyone

Director : Richard Curtis, Hannah Cameron

Role : Composer

Voices for Change World Online Spatial Audio Soundtrack Website

Company : Google Arts and Culture with Project Everyone

Director : Richard Curtis, Hannah Cameron

Role : Composer

Forest For Change Global Goals Pavilion

Generative Spatial Audio Soundtrack, Installation

Company : Project Everyone, London Design Biennale

Lead Creatives : Es Devlin, Brian Eno, Richard Curtis, Hannah Cameron

Role : Composer

Robert composed a 3d generative soundtrack for the Global Goals Pavilion in Es Devlin’s Forest For Change, the centrepiece of the London Design Biennale 2021. He worked alongside Brian Eno, who created the bird soundscape.

The Global Goals are 17 goals set by the UN for the future of mankind. Project Everyone, a company created to champion the goals, commissioned Robert to create this soundtrack.

The pavilion installation allowed members of the public to record their own message, which was incorporated into the composition in 3d sound.

Singers Daisy Chute and Lydia Clowes sang and whispered the goals, which were fed through a spatialised sound engine featuring granular resynthesis.

A Dozen Chains – Short Film

Winner of London Sci Fi 48hr Film Festival

Company : Little Big Problem Productions

Director : Rachel Friere

Role : Composer, Mixer

Wavepaths – Adaptive Generative Music for Psychedelic Therapy ( 2020-ongoing )

Wavepaths is a platform that uses adaptive, personalised and often generative music for psychedelic therapy and a number of other use cases.

Company : Wavepaths

Lead Creative : Mendel Kaelen

Role : Composer, Music Lead, Generative Music System Designer and Developer

2019

Stitched ft London Contemporary Orchestra – Audience reactive composition, performance and spatial audio installation

Commissioned by : Bridgepoint Rye

Director : Tim Hopkins

Role : Composer

This piece, performed for the opening of a new installation by Tim Hopkins showing the Hastings Tapestry, was audience adaptive. As the audience moved around the hall, the music changed in response. I performed it with a small ensemble from the London Contemporary Orchestra of viola da gamba, alto flute, violin and sackbut.

The Eternal Golden Braid – audience adaptive composition collaborating with AI, performed by the LCO soloists at Barbican

Commissioned by : The Barbican

Lead Creatives : Marcus Du Sautoy, Joana Seguro, Chris Sharp

Role : Composer

I composed a piece which ended this performance lecture by Marcus Du Sautoy at the Barbican on March 9th 2019.

In a pre-recorded interview with Douglas Hofstadter, whose book Gödel, Escher, Bach inspired this event, Du Sautoy posits Bach as a musical coder, applying rules to musical material to grow something complex and beautiful. Bach’s works were then fed through a machine learning process created by computational artist Parag K Mital, which uses the data in Bach’s writing to ‘compose’ a piece of its own. I then adapted and developed some of the AI output into a piece which could react to the audience.

The piece was performed by a string trio of London Contemporary Orchestra soloists.

2018

WDCH Dreams with LA Phil, Refik Anadol and Google Arts & Culture

Company : LA Philharmonic

Lead Creative : Refik Anadol

Role : Composer, Sound Designer, Algorithmic Systems

I collaborated with Kerim Karaoglu, Parag Mital and Refik Anadol Studio on this soundtrack for the LA Phil’s 100th anniversary gala event. The music and sound design feature archival audio from the huge catalogue of the LA Phil alongside new audio material generated with machine learning, made using a wide range of cutting edge techniques, as well as new original music.

The event itself was a vast projection mapped spectacle on all surfaces of the Walt Disney Concert Hall in Los Angeles. The visuals were also generated using many elaborate machine learning and data art techniques.

Bose AR

Custom Audio Augmented Reality Musical Score Library

Company : Bose

Lead Creatives : Todd Reily

Role : Composer

DAU Project – Adaptive Audio Augmented Reality App

Lead Creative : Rob Del Naja, Ilya Khrzhanovskiy, Alexei Slusarchuk

Role : Augmented Sound and Music Experience Design and Development

Fantom x Mezzanine – Massive Attack

Company : Fantom

Lead Creative : Rob Del Naja / Massive Attack

Role : Remixer, Producer, Algorithmic music system

I created custom algorithmic remixes for this sensory remixer app release of Mezzanine, the seminal album from Massive Attack, on its 21st anniversary. This was a collaboration with Rob Del Naja, Marc Picken, Andrew Melchior, Yair Szarf, The Nation, Joel Vaiser, N2K, Hingston Studio and Blokur. The app lets the user navigate custom remixes of each song based on how they interact with it through their phone’s sensors. For example, you can film an Instagram video and the music adapts in realtime to the activity in the image. It also responds to many other sensor inputs, including movement, touch and facial expression recognition, and contains some secret hidden easter eggs. The app is an early experiment exploring the possibilities for music in augmented reality. It also tracks all playback of the core musical stem material on the blockchain.

Google Pixel Your Luxury Portrait at Selfridges London – Adaptive Music Installation

Company : Google, TEM

Creatives : Joanna Tulej, Tom Seymour, Chris Davenport, Chris Pearson, David Li, Artists & Engineers

Role : Composer, Music System Designer and Developer

2017

Stealth VR project

Company : [REDACTED]

Director : A very well known music video director

Role : Custom procedural DSP designer

Pixel Records Sci Fi Supermall – Soundtrack to event in Berlin composed with AI systems. With Boiler Room and Google.

Company : Google x Boiler Room

Lead Creative : Joana Seguro

Role : Composer using AI systems

I composed music using AI / machine learning systems for a soundtrack to an amazing launch event in Berlin by Boiler Room and Google for their Pixel 2 phone.

We used many machine learning processes in the music composition, including Google Magenta / TensorFlow. There was a website system which took recordings of people’s voices and, through a TensorFlow style transfer type algorithm, created a new snippet of music from the contour of each voice.

I worked together with my assistant Franky Redente on the music and the neural synthesis was built by Parag Mital.

Rebel Queen – soundtrack for VR experience. Richard Mills & Kim-Leigh Pontin, Sky UK

Company : Sky

Lead Creative : Kim Leigh Pontin

Role : Composer

This was a soundtrack to a VR experience about Queen Nefertiti and ancient Egypt. It won a ‘Commendation’ at this year’s Venice Biennale. The soundtrack is adaptive to user interaction at a number of points in the narrative.

VR Opera addressing Conflict Resolution – VR Research

Company : Tim Hopkins, Arts Council England, supported by V&A, Oculus, Valve, Sussex Uni

Lead Creative : Tim Hopkins

Role : Composer, Technologist

2016

Ecliptic – Infinite 3d generative soundscape – for L-ISA Island by L-Acoustics

Company : L-Acoustics L-ISA

Lead Creative : Christian Heil

Role : Composer, Generative Systems Designer and Developer

I was commissioned by L-Acoustics to write an infinite generative soundscape for their incredible Island sound system. Reminiscent of the soundtrack to a strange, very long, slow-paced art sci-fi movie, it is a hypnotising piece, perfect for calm contemplation and focus.

The system sounds amazing and features 23 speakers completely surrounding the listener, 2 subwoofers and 24 power amplifiers!

On Your Wavelength 2

Company : Marcus Lyall Studio

Lead Creative : Marcus Lyall

Role : Composer

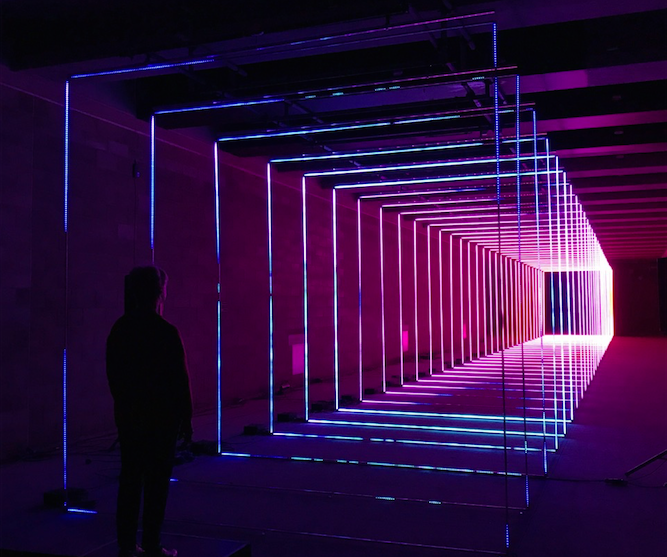

I created a new adaptive soundtrack for this piece, which has developed dramatically since its last incarnation over a year ago. This version uses over 30000 LEDs controlled by your brain – the music system creates a unique composition which you ‘play’ using how much you concentrate.

BBC Click Live

Company : BBC

Lead Creative : Nick Kwek

Role : Composer, Adaptive Music Systems Developer

I composed an adaptive soundtrack for part of the BBC Click Live show in November 2016. The score adapted to the biometric data of the audience. It became more intense as their emotions became heightened. This project used data from XO Studio’s wristband sensors which 80 members of the audience were wearing.

Jibo – Personal Robot

Company : Jibo Inc.

Lead Creative : Cynthia Breazeal

Role : Musical Procedural Speech Sound Design

Pzizz, Sleep App Music System

Company : Pzizz

Lead Creative : Rockwell Shah

Role : Adaptive Music Systems Designer and Developer

I worked with Pzizz to create the latest version of their hybrid music system. The app features human composed but algorithmically remixed music and sound. In this case the human composer was the very talented Ethan Cohen. The system learns over time to prioritise music which the user likes. The app has over 500,000 users and even J.K. Rowling is a fan! Try it out on iOS.

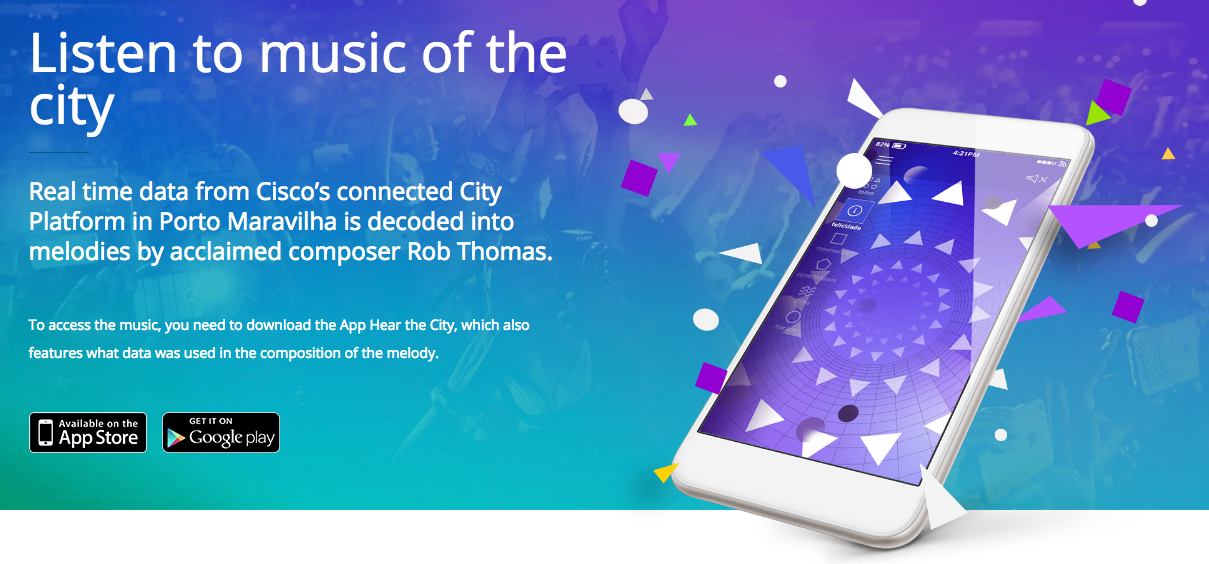

Hear the City App, CISCO – Adaptive Soundtrack to the city of Rio – Porto Maravilha, Rio Olympics 2016

Company : yDreams Brasil, CISCO

Lead Creative : Daniel Prado

Role : Composer, Music System Designer and Developer

In August 2016 I was commissioned by CISCO to compose an adaptive soundtrack to the city of Rio – Porto Maravilha as part of CISCO’s connected city platform. This was part of their role as an official supporter of the Rio 2016 Olympic and Paralympic Games. The soundtrack creates music in realtime, responding to live city data about connectivity, traffic, happiness, weather and time. This project was in conjunction with yDreams Brasil. More details here.

Fantom Sensory Music App, with Massive Attack

Company : Fantom

Lead Creative : Rob Del Naja / Massive Attack

Role : Remixer, Producer, Creator of custom algorithmic music system

I worked together with Rob Del Naja, Marc Picken, Andrew Melchior, Yair Szarf and The Nation on this sensory music experience app, which features new material from Massive Attack. The app analyses the live video image from the device camera, the user’s heart rate ( via the Apple Watch ), accelerometer activity, time of day and social media streams to create a unique remix of the music for every listen by every user.

Check out an interview I did alongside Rob Del Naja from Massive Attack in VICE about the project here

Biobase, Mindful Breathing App, by Biobeats

I created the algorithmic adaptive music system for this app, which helps you do breathing exercises to reduce stress. The app monitors your Heart Rate Variability using the light and camera together with a custom machine learning algorithm to find out how well you are doing the exercise. If you are doing it well, the music expands in reward. The music system algorithmically creates a different arrangement for each session.

In 2020 Biobeats were acquired by Medopad / Huma.

Company : Biobeats

Lead Creatives : David Plans, Davide Morelli

Role : Composer, Music Systems Design, UX design

On Your Wavelength, by Marcus Lyall in collaboration with Robert Thomas and Alex Anpilogov

An interactive laser and music composition you control with your mind. On this project I collaborated with Marcus Lyall, the artist responsible for the award winning stage visuals for the Chemical Brothers and Metallica. It was commissioned for MERGE 2015 arts festival in Bankside, London. Participants wear an EEG headset which monitors their attention levels. This then controls many aspects of the lasers and the soundtrack. Alex Anpilogov created a method to directly control Marcus’ laser compositions based on the EEG signals and I composed and built the adaptive soundtrack using Pure data. More details here.

This piece was exhibited from Sept 18th to Oct 18th 2015.

Company : Marcus Lyall Studio

Lead Creative : Marcus Lyall

Role : Composer

Mindsong, EEG controlled meditation app with Mikey Siegel

Mindsong is an app which helps you meditate better. It uses meditation signals from an EEG headset to control a realtime adaptive music soundtrack which I composed. This project was created by Mikey Siegel, with Beau Silver as tech lead. The project was built in Pure data and distributed using LibPd on iOS.

Company : Mindsong

Lead Creative : Mikey Siegel

Role : Composer, Music Systems Design and Software Developer

The Brain Show, National Geographic Channel

I composed adaptive music and sound design for this virtual reality exhibit for the National Geographic show Brain Games. Visitors spoke their name into the software and had their face scanned in 3d. Then they wore an Oculus Rift headset which incorporated an EEG headset. This allowed them to explore the power of their brain in virtual reality. As they concentrated more, the music became more intense, and their head movements and visual focus re-constructed the geometry of their face around them. It featured 3 different adaptive music compositions. Each piece reacted to many things, including brain activity type, attention, head movement and body position. The system was built in Pure data.

This project was with Xister and Artists & Engineers with procedural VR visuals created in VVVV by Will Young.

Company : Xister / Artists & Engineers

Lead Creative : Sarah Grimaldi, Louis Mustill

Role : Composer, Music Systems Design and Software Developer

Biobeats, Adaptive music composition and sound design, iOS, Android

I am working with Biobeats to create engaging adaptive audio experiences which are healthy. Get on Up is an app which makes any music react and adapt to your running pace in realtime. Hear and Now is a mindful breathing app which helps you reduce stress levels by monitoring your heart rate. Both apps sync data to the BioBeats machine learning API which creates realtime feedback on health. They were built in Pure data and distributed using LibPd on iOS.

Company : Biobeats

Lead Creative : David Plans, Davide Morelli

Role : Composer, Music Systems Design and Software Developer

“Future User” in DIS magazine by Lil’ Data – PC MUSIC

A lil collaboration with @lildata, @Atour and @enzienaudio. The first web deployment of an interactive piece using the Heavy procedural audio pipeline by Enzien Audio. This deployed a Pure data patch, via the Heavy compiler, into a browser to run on the web. In addition, thanks to @atour’s code, multiple users can change the music simultaneously by toggling words in the text on a webpage. All the audio runs procedurally in the browser.

Try it here : http://dismagazine.com/disco/mixes/74428/lil-data-future-user/

Source : https://github.com/lil-data/futureuser

Role : Composer, Music Systems Design and Software Developer

Arboreal Lightning – Imogen Heap’s Reverb Festival

https://www.youtube.com/watch?v=Zs2oY7AbfnU

Commissioned by Imogen Heap for her Reverb 2014 festival. I worked with Artists & Engineers and Sennheiser to create an adaptive and interactive 3d soundscape using ambisonics for the large LED tree structure designed by Atmos which was the centrepiece of the festival.

Visual software design for the tree was by Adam Stark.

Company : Reverb Festival, Artists & Engineers, Atmos

Lead Creatives : Imogen Heap, Atmos, Louis Mustill

Role : Composer, Music Systems Design and Software Developer

Projects while Chief Creative Officer of RjDj :

Imogen Heap – Run-Time App.

This was a prototype adaptive music running / jogging app I worked on with Imogen Heap. It was the first example of an adaptive music system specifically for running.

Company : RjDj / Reality Jockey Ltd

Lead Creative : Imogen Heap, Michael Breidenbrucker

Role : Reactive Music Production, Music Systems Design and Software Developer

The Dark Knight Rises Z+ App, iOS.

https://www.youtube.com/watch?v=cmgrLrCm8F8

The Dark Knight Rises app featured multiple cues from the movie, reimagined to work adaptively and respond to many aspects of your life. It also contained The Bat, a very dynamic sound design piece, and multiple reactive remixes from artists including Junkie XL.

Company : RjDj / Reality Jockey Ltd

Lead Creatives : Christopher Nolan, Hans Zimmer and Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

Dimensions – Augmented Audio Reality game, iOS.

http://www.youtube.com/watch?v=7-caFZJ1-oM

Pioneering Audio Augmented reality game by RjDj.

I created the music, sound design, directed voice acting and designed the music system. The app also featured the Ghost Dimension composed by Hans Zimmer.

Company : RjDj / Reality Jockey Ltd

Lead Creative : Michael Breidenbrucker

Role : Composer, Reactive Music Production, Music Systems Design and Software Developer

Inception the App – Augmented Audio Reality App, iOS.

Official app experience extension of the film Inception.

With Christopher Nolan, Hans Zimmer and Michael Breidenbrucker.

6 million+ downloads worldwide. No. 1 in the App Store in many countries.

Company : RjDj / Reality Jockey Ltd

Lead Creatives : Christopher Nolan, Hans Zimmer and Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

RjDj iPhone App, iOS

Reactive Music Production with many artists including AIR, Carl Craig, Little Boots, Booka Shade, Jimmy Edgar, Chiddy Bang, Fabrice Lig, Kirsty Hawkshaw, Acid Pauli / Console and many more.

Company : RjDj / Reality Jockey Ltd

Lead Creative : Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

Love by AIR iPhone App, iOS.

Working with AIR to produce an interactive version of their song Love. Released on Valentine’s Day.

Little Boots Reactive Remixer iPhone App, iOS.

Working with Little Boots to produce interactive versions of 3 songs from her debut album Hands.

Company : RjDj / Reality Jockey Ltd

Lead Creative : Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

Rj Voyager iPad App, iOS.

http://www.youtube.com/watch?v=hvWFv954IBU

Reactive Music Production / UX design on this touch control synthesis / music exploration app for the iPad.

Company : RjDj / Reality Jockey Ltd

Lead Creative : Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

Kids on DSP iPhone App, iOS.

Reactive Music Production on this, the first iPhone-only album-as-app release. This app pioneered the release of an album as mobile app software.

All projects at RjDj were built using RjDj’s series of custom ports of Pure data to iOS. These had some commonality with LibPd but also featured other optimisations specific to iOS and the apps in question.

Reuters News Video about Kids on DSP

Company : RjDj / Reality Jockey Ltd

Lead Creative : Michael Breidenbrucker

Role : Remixer, Reactive Music Production, Music Systems Design and Software Developer

Older projects :

Fluidity – Wii game early stage soundtrack, Curve Studios, eventually published by Nintendo

Explodemon! – PC / PS3 GDC announcement trailer soundtrack, Curve Studios

Czech National Radio – Radio news, weather and traffic themes

Revlon – Advertising music, with Sugar Cube Studios

Equanimity – Sound installation audio engineering for the first official holographic portrait of HRH Queen of England, with Leanda Brass

Visit Mexico Metaverse Soundtrack – The first soundtrack to the official virtual metaverse presence of a country, commissioned by Mexico

Costa Rica Metaverse Soundtrack, commissioned by Costa Rica

PARSEC – Metaverse multi user voice reactive music game, commissioned by Manning Press

The Dunes – co-writer, arranger, producer in this duo with singer Sarah-Jane Taylor. On Dave Rowntree’s ( Blur ) Transistor Project Label